Driving AI Forward: Power Efficiency and Security as Core Pillars

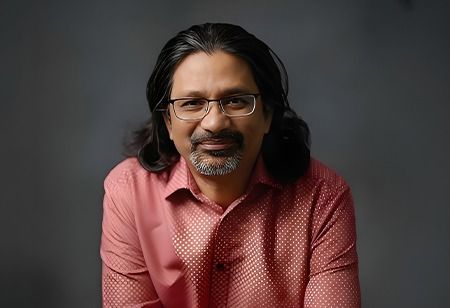

Gopi Sirineni, Founder & CEO of Axiado, is an accomplished leader with proven expertise in implementing strategies that drive companies forward, resulting in sustainable growth and market leadership. He has built highly effective, cost-efficient organizations, fostered technological advancement, enhanced capabilities, and promoted business excellence.

Artificial intelligence has rapidly moved from experimental environments into the operational core of modern organizations. Recommendation engines influence consumer behavior, machine learning models guide financial decisions, and AI-driven analytics now shape everything from healthcare diagnostics to supply chain planning. As this transition accelerates, the conversation around AI is beginning to shift. Organizations are recognizing that the true performance of AI systems depends not only on algorithms or data, but also on the underlying system architecture, silicon design, and security mechanisms that support them.

Behind every AI breakthrough lies an enormous amount of computation. Training and deploying modern AI models requires specialized hardware, high-performance data pipelines, and systems capable of processing vast volumes of information continuously. As models grow larger and workloads expand, the infrastructure supporting them must handle increasing power consumption, higher thermal loads, and constant data movement across networks and memory systems.

This shift is bringing two design considerations into sharper focus: power efficiency and system security. Historically, these priorities were addressed separately. Today they are increasingly interconnected. As AI becomes embedded in critical enterprise systems and national digital infrastructure, inefficient or insecure system design can introduce risks that extend far beyond technology teams. Infrastructure choices now directly influence operational resilience, cost structures, and organizational trust.

The Power Challenge Behind AI Expansion

AI workloads are among the most computationally demanding tasks organizations operate today. Training large models requires massive parallel processing across GPUs, accelerators, and high-bandwidth memory systems. Even inference workloads, particularly those operating in real time, generate sustained demand on processors and data pipelines.

This level of compute intensity translates directly into energy consumption. Large AI data centers can draw megawatts of power, and even marginal inefficiencies in system architecture can accumulate into substantial operational costs over time. As enterprises expand their AI capabilities across multiple business functions, energy consumption is becoming a growing component of infrastructure budgets.
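
The arithmetic behind "marginal inefficiencies accumulate" is easy to sketch. The Python below estimates the yearly cost of a small avoidable overhead at a constant power draw. The 10 MW facility, the 2 percent overhead and the $100 per MWh electricity price are hypothetical inputs chosen for round numbers, not figures from any real deployment.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_mwh(avg_power_mw: float, hours: float = HOURS_PER_YEAR) -> float:
    """Energy in MWh drawn at a constant average power over the given hours."""
    return avg_power_mw * hours


def annual_waste_cost(avg_power_mw: float, overhead_fraction: float, price_per_mwh: float) -> float:
    """Yearly cost of the avoidable share of a facility's energy use.

    overhead_fraction is the fraction of total draw that is avoidable waste,
    e.g. 0.02 for a 2 percent inefficiency.
    """
    wasted_mwh = annual_energy_mwh(avg_power_mw) * overhead_fraction
    return wasted_mwh * price_per_mwh


if __name__ == "__main__":
    # Hypothetical inputs: 10 MW average draw, 2% avoidable overhead, $100 per MWh.
    cost = annual_waste_cost(10.0, 0.02, 100.0)
    print(f"${cost:,.0f} per year")  # 87,600 MWh * 2% = 1,752 MWh -> $175,200
```

Under these assumed values, a 2 percent inefficiency amounts to about 1,752 MWh and $175,200 a year, and the figure scales linearly with facility size and electricity price.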

Power usage also has broader implications. Many organizations now measure technology operations against sustainability commitments and environmental benchmarks. AI infrastructure that delivers higher performance per watt can help reduce operational expenses while supporting these broader organizational goals.
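
Performance per watt is useful work divided by the power drawn while doing it; inverted, it gives the energy spent per unit of work. The sketch below compares two hypothetical systems (the names, throughput and power figures are invented for illustration) to show that the faster system is not necessarily the more efficient one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    name: str
    throughput_per_s: float  # completed inferences per second in a benchmark run
    avg_power_w: float       # average power draw during that same run, in watts

    @property
    def perf_per_watt(self) -> float:
        # (inferences / s) / (J / s) = inferences per joule
        return self.throughput_per_s / self.avg_power_w

    @property
    def joules_per_inference(self) -> float:
        return self.avg_power_w / self.throughput_per_s


# Hypothetical measurements for two systems.
system_a = SystemProfile("A", throughput_per_s=2000.0, avg_power_w=700.0)
system_b = SystemProfile("B", throughput_per_s=1800.0, avg_power_w=500.0)

if __name__ == "__main__":
    for s in (system_a, system_b):
        print(f"{s.name}: {s.perf_per_watt:.2f} inferences/J, "
              f"{s.joules_per_inference:.3f} J/inference")
```

With these assumed numbers, system B delivers 3.6 inferences per joule against roughly 2.9 for system A, so it spends about 0.28 J per inference instead of 0.35 J despite its lower throughput.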

The challenge becomes even more complex outside traditional data centers. Increasingly, AI is being deployed closer to where data is generated. Manufacturing environments, connected vehicles, smart infrastructure, and industrial systems rely on edge computing where power availability is often constrained. In these environments, efficient system design determines whether AI deployments remain viable at scale.

Security as a Foundational Design Requirement

While efficiency ensures operational sustainability, security ensures trust. AI systems frequently process sensitive information, including financial transactions, personal data, proprietary research, and critical operational intelligence. A compromise within the infrastructure supporting these workloads can have far-reaching consequences.

Many organizations still approach security primarily through software-based controls and network monitoring. However, the threat landscape is evolving. Attackers increasingly target the lower layers of computing systems, including firmware, hardware interfaces, and system controllers. Vulnerabilities at these levels can expose infrastructure in ways traditional security tools may struggle to detect.

As a result, protecting AI infrastructure increasingly requires hardware-rooted security mechanisms embedded directly within system architecture. Capabilities such as secure boot processes, trusted execution environments, encrypted memory, and protected communication channels between compute components help ensure that workloads remain protected throughout their lifecycle.
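
Of the mechanisms listed, measured boot is straightforward to illustrate. In a TPM-style design, each boot stage is hashed and folded into a register with an extend operation, new = SHA-256(old || SHA-256(stage)), so the final value depends on every stage and on their order. The Python below models that idea in software; the stage names are hypothetical, and it is a simplified illustration, not how any particular product, Axiado's included, implements its root of trust. Secure boot proper goes further by verifying a signature on each stage and refusing to run it on failure, which this sketch does not model.

```python
import hashlib
import hmac

REGISTER_SIZE = 32  # bytes in a SHA-256 digest


def extend(register: bytes, stage_image: bytes) -> bytes:
    """TPM-style extend: new = SHA-256(old || SHA-256(stage_image))."""
    measurement = hashlib.sha256(stage_image).digest()
    return hashlib.sha256(register + measurement).digest()


def measure_chain(stages: list[bytes]) -> bytes:
    """Fold every boot stage, in order, into a register that starts at zero."""
    register = bytes(REGISTER_SIZE)
    for image in stages:
        register = extend(register, image)
    return register


# Hypothetical stage images standing in for boot ROM, bootloader, firmware and kernel.
golden_chain = [b"boot-rom-v1", b"bootloader-v3", b"bmc-firmware-v7", b"os-kernel-v2"]
tampered_chain = [b"boot-rom-v1", b"bootloader-v3", b"bmc-firmware-v7-patched", b"os-kernel-v2"]

expected = measure_chain(golden_chain)


def chain_is_trusted(stages: list[bytes]) -> bool:
    # Constant-time comparison against the known-good value.
    return hmac.compare_digest(measure_chain(stages), expected)


if __name__ == "__main__":
    print(chain_is_trusted(golden_chain))    # True
    print(chain_is_trusted(tampered_chain))  # False
```

Because any change anywhere in the chain alters the final register, a verifier holding the expected value can detect the modified firmware stage. In a real system that comparison would typically be made against a signed attestation report rather than a value computed on the same machine.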

Embedding these protections into system design also addresses a practical challenge. Retrofitting security into infrastructure after deployment is often expensive and incomplete. Integrating security from the outset enables organizations to maintain performance while reducing the likelihood of vulnerabilities emerging later.

Balancing Performance, Efficiency and Trust

Designing infrastructure for AI is becoming a sophisticated architectural challenge. Modern computing environments combine CPUs, GPUs, AI accelerators, and high-bandwidth memory subsystems, each with distinct performance characteristics and power profiles.

Optimizing these systems requires more than maximizing raw compute capacity. Engineers must consider how workloads are distributed across components, how systems behave under sustained demand, and how energy consumption evolves during continuous operation. Techniques such as intelligent power management, dynamic voltage and frequency scaling (DVFS), and advanced thermal optimization are becoming essential to maintaining efficient performance.
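
Dynamic voltage and frequency scaling pays off because the switching power of CMOS logic scales roughly as P ≈ αCV²f, where α is the activity factor, C the switched capacitance, V the supply voltage and f the clock frequency. Lowering the frequency also permits a lower voltage, and voltage enters squared. The sketch below picks the slowest operating point that still covers demand with some headroom. The P-state table, the 10 percent headroom and the unit-free power figures are hypothetical, and leakage (static) power, which real governors must also account for, is ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    freq_ghz: float
    voltage_v: float


# Hypothetical P-state table in ascending frequency order; real tables are chip-specific.
P_STATES = [
    OperatingPoint(1.0, 0.70),
    OperatingPoint(1.5, 0.80),
    OperatingPoint(2.0, 0.90),
    OperatingPoint(2.5, 1.00),
]


def dynamic_power(op: OperatingPoint, activity: float = 1.0, capacitance: float = 1.0) -> float:
    """Relative CMOS switching power: activity * C * V^2 * f (leakage ignored)."""
    return activity * capacitance * op.voltage_v ** 2 * op.freq_ghz


def select_point(demand_ghz: float, headroom: float = 0.10) -> OperatingPoint:
    """Return the slowest P-state whose frequency covers demand plus headroom.

    demand_ghz expresses the workload's need as the clock rate at which the
    core would be exactly fully busy.
    """
    target = demand_ghz * (1.0 + headroom)
    for op in P_STATES:
        if op.freq_ghz >= target:
            return op
    return P_STATES[-1]  # demand exceeds the table: run at the top point


if __name__ == "__main__":
    chosen = select_point(1.2)
    ratio = dynamic_power(chosen) / dynamic_power(P_STATES[-1])
    print(chosen, round(ratio, 3))
```

Under these assumptions, a workload needing 1.2 GHz of work runs at 1.5 GHz and 0.80 V, drawing about 0.96 units against 2.5 units at the top operating point, or roughly 38 percent of peak dynamic power.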


Security considerations introduce an additional layer of complexity. Sensitive workloads may require isolation, encrypted data paths, and secure key management. Communication between processors, accelerators, and memory systems must be protected against tampering or interception. When integrated thoughtfully into system architecture, these protections can coexist with high-performance computing environments without introducing significant latency.
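
Protection against tampering can be illustrated with message authentication. The Python below frames each message with a monotonically increasing counter and an HMAC-SHA256 tag, so a receiver can reject modified frames and replays. It is a software model under stated assumptions: the shared key is a placeholder (in practice it would come from a key-management service or a hardware root of trust), the frame layout is invented for illustration, and it provides integrity only. Guarding against interception additionally requires encryption, for example an AEAD cipher such as AES-GCM, which is outside Python's standard library. Where hardware interconnects offer such protections, they implement them in silicon rather than in code like this.

```python
import hashlib
import hmac
import struct

COUNTER_LEN = 8  # 64-bit big-endian message counter
TAG_LEN = 32     # HMAC-SHA256 tag size in bytes


def seal(key: bytes, counter: int, payload: bytes) -> bytes:
    """Build a frame: counter || payload || HMAC-SHA256(key, counter || payload)."""
    header = struct.pack(">Q", counter)
    tag = hmac.new(key, header + payload, hashlib.sha256).digest()
    return header + payload + tag


class Receiver:
    """Accepts only authentic frames whose counter is strictly increasing."""

    def __init__(self, key: bytes) -> None:
        self._key = key
        self._last_counter = -1

    def open(self, frame: bytes) -> bytes:
        if len(frame) < COUNTER_LEN + TAG_LEN:
            raise ValueError("frame too short")
        header = frame[:COUNTER_LEN]
        payload = frame[COUNTER_LEN:-TAG_LEN]
        tag = frame[-TAG_LEN:]
        expected = hmac.new(self._key, header + payload, hashlib.sha256).digest()
        # Constant-time comparison avoids leaking how many tag bytes matched.
        if not hmac.compare_digest(tag, expected):
            raise ValueError("integrity check failed: frame was modified")
        (counter,) = struct.unpack(">Q", header)
        if counter <= self._last_counter:
            raise ValueError("replayed or out-of-order frame")
        self._last_counter = counter
        return payload


if __name__ == "__main__":
    key = bytes(range(32))  # placeholder key for illustration only
    rx = Receiver(key)
    frame = seal(key, 1, b"weights-chunk-0")
    print(rx.open(frame))  # b'weights-chunk-0'
```

The counter is checked only after the tag verifies, so a forged frame cannot advance the receiver's state.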

The most effective infrastructure designs therefore treat performance, efficiency, and security as complementary objectives rather than competing priorities.

Trust Across the AI Infrastructure Supply Chain

Another emerging challenge lies in the global supply chains that support modern computing infrastructure. Chips, firmware modules, and system components often originate from multiple vendors and manufacturing environments across different regions.

Ensuring that these components operate securely requires mechanisms that verify system integrity at every stage, from hardware initialization and firmware loading to runtime execution across distributed AI infrastructure. As AI becomes increasingly embedded in critical sectors, trust in computing systems begins with trust in the hardware and firmware layers that underpin them.
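
Verifying integrity across a multi-vendor supply chain often comes down to comparing what is actually loaded against reference values published for each component. The sketch below checks observed firmware images against an allowlist of SHA-256 digests and reports mismatched, unknown and missing components. The vendor names, component names, versions and reference digests are hypothetical placeholders; in practice, reference values would come from signed vendor manifests and the check would usually run on a separate attestation verifier.

```python
import hashlib
from collections.abc import Iterable

ComponentId = tuple[str, str, str]  # (vendor, component, version), hypothetical naming


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


# Hypothetical reference manifest. Real reference digests would come from signed
# vendor manifests; here they are derived from placeholder bytes for illustration.
REFERENCE: dict[ComponentId, str] = {
    ("vendor-a", "nic-firmware", "4.2.1"): sha256_hex(b"nic-fw-4.2.1"),
    ("vendor-b", "bmc-firmware", "7.0.3"): sha256_hex(b"bmc-fw-7.0.3"),
}


def audit(observed: Iterable[tuple[ComponentId, bytes]]) -> list[str]:
    """Compare loaded images with reference digests; an empty result means all matched."""
    findings: list[str] = []
    seen: set[ComponentId] = set()
    for component_id, image in observed:
        seen.add(component_id)
        expected = REFERENCE.get(component_id)
        if expected is None:
            findings.append(f"unknown component: {component_id}")
        elif sha256_hex(image) != expected:
            findings.append(f"digest mismatch: {component_id}")
    # Components the manifest expects but that never reported in.
    for component_id in REFERENCE:
        if component_id not in seen:
            findings.append(f"missing component: {component_id}")
    return findings


if __name__ == "__main__":
    loaded = [
        (("vendor-a", "nic-firmware", "4.2.1"), b"nic-fw-4.2.1"),
        (("vendor-b", "bmc-firmware", "7.0.3"), b"bmc-fw-7.0.3-modified"),
    ]
    for finding in audit(loaded):
        print(finding)
```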

Designing for the Next Phase of AI

The pace of innovation in AI infrastructure continues to accelerate. New generations of processors and accelerators are delivering higher performance while improving energy efficiency. At the same time, security innovations are embedding trust mechanisms directly into silicon-level architectures.


AI itself is also beginning to assist in infrastructure optimization. Intelligent monitoring systems can analyze workload patterns, anticipate resource requirements, and dynamically adjust system parameters to improve efficiency and reliability.
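
A full AI-driven optimizer is beyond a short example, but the underlying loop of forecasting demand and then adjusting resources can be sketched with a simple statistical stand-in. The Python below uses an exponentially weighted moving average (EWMA) rather than a learned model to forecast request load, then sizes the number of active nodes with a safety margin so the remainder can be idled or powered down. The sample values, smoothing factor, per-node capacity and 20 percent headroom are all hypothetical.

```python
import math


def ewma_forecast(samples: list[float], alpha: float = 0.5) -> float:
    """Exponentially weighted moving average; recent samples carry more weight."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    estimate = samples[0]
    for x in samples[1:]:
        estimate = alpha * x + (1.0 - alpha) * estimate
    return estimate


def nodes_needed(forecast_rps: float, per_node_rps: float, headroom: float = 0.2) -> int:
    """Active nodes needed to serve the forecast load plus a safety margin (at least one)."""
    return max(1, math.ceil(forecast_rps * (1.0 + headroom) / per_node_rps))


if __name__ == "__main__":
    # Hypothetical requests-per-second samples from recent monitoring intervals.
    recent = [100.0, 120.0, 160.0, 200.0]
    forecast = ewma_forecast(recent)
    print(forecast, nodes_needed(forecast, per_node_rps=50.0))  # 167.5 5
```

With these assumed samples and a smoothing factor of 0.5, the forecast is 167.5 requests per second; at 50 requests per second per node with 20 percent headroom, that calls for five active nodes. An ML-based system would replace the forecaster while keeping the same decision step.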

These developments point toward a future where system architecture becomes even more central to AI success. Organizations that approach infrastructure strategically will be better positioned to scale AI responsibly and sustainably.


Much of the public conversation around AI focuses on algorithms, models, and breakthroughs in machine learning. Yet the real foundation of AI progress lies deeper in the computing stack. Power-efficient architectures determine how far systems can scale, while secure system designs determine whether they can be trusted.

As AI becomes embedded in critical industries and national digital ecosystems, these architectural choices will shape the reliability and resilience of the digital economy. Organizations that prioritize efficiency and security at the system design level will be best positioned to support the next generation of AI innovation.